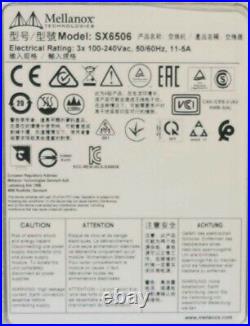

New Mellanox SX6506 MSX6506-NR 7RU Switch Chassis 1x Management Module 2x PSU

New Open Box - Opened to verify contents. 1 x New Mellanox SX6506 MSX6506-NR 7RU Switch Chassis with:

- 1 x Management Module 105-575-018-00
- 2 x Power Supplies 105-575-014-00
- 1 x Rack Installation Kit MTR005100
- 1 x Hardware Installation Guide
- 1 x Cable Management Bracket MEC002820
- 1 x Cable Management Bracket MEC002821
- 1 x Leaf Side Cable Supporter Kit (Qty: 3) MTR006503

Scaling-Out Data Centers with Fourteen Data Rate (FDR) InfiniBand. Faster servers based on PCIe 3.0, combined with high-performance storage and applications that use increasingly complex computations, are causing data bandwidth requirements to spiral upward. As servers are deployed with next-generation processors, High-Performance Computing (HPC) environments and Enterprise Data Centers (EDC) will need every last bit of bandwidth delivered by Mellanox's next generation of FDR InfiniBand high-speed smart switches. Built with Mellanox's latest SwitchX® InfiniBand switch device, the SX6506 provides up to 108 ports, each with 56Gb/s of full bidirectional bandwidth.

The SX6506 can scale as the number of nodes per cluster and the number of cores per node increase. This modular chassis switch provides an excellent price-performance ratio for medium to extremely large clusters, along with the reliability and manageability expected from a director-class switch. The leaf and spine blades, the management modules, the power supplies and the fan units are all hot-swappable to help eliminate downtime.

Why Software Defined Networking (SDN)?

Data center networks have become exceedingly complex. IT managers cannot optimize the networks for their applications, which leads to high CAPEX/OPEX, low ROI and IT headaches. Mellanox InfiniBand SDN switches ensure separation between the control and data planes. InfiniBand enables centralized management and a centralized view of the network, allows external applications to program the network, and enables a cost-effective, simple and flat interconnect infrastructure.

The SX6506 enables efficient computing with features such as static routing, adaptive routing, and congestion control. These features ensure the maximum effective fabric bandwidth by eliminating congestion hot spots. The SX6506 comes with an onboard subnet manager, enabling simple, out-of-the-box fabric bring-up for up to 648 nodes.

The MLNX-OS™ software delivers complete chassis management of the firmware, power supplies, fans, ports and other interfaces. MLNX-OS also provides a license-activated embedded diagnostic tool called Fabric Inspector, which checks node-to-node and node-to-switch connectivity and ensures fabric health. The SX6506 can also be coupled with Mellanox's Unified Fabric Manager™ (UFM™) software for managing scale-out InfiniBand computing. UFM enables data center operators to efficiently provision, monitor and operate the modern data center fabric. UFM boosts application performance and ensures that the fabric is up and running at all times.

18 QSFP InfiniBand ports per blade, up to 56Gb/s per QSFP. Aggregate data throughput 12.1 Tb/s.
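The port and throughput figures above can be cross-checked with a little arithmetic. The sketch below is illustrative only: it assumes the 12.1 Tb/s aggregate counts both directions of every port and that 1 Tb/s = 1000 Gb/s. The function names are made up for this example, and the leaf blade count is derived from the listing's numbers rather than quoted from it.

```python
# Figures quoted in the listing.
TOTAL_PORTS = 108          # "up to 108" 56Gb/s ports
PORTS_PER_LEAF_BLADE = 18  # "18 QSFP InfiniBand ports per blade"
PORT_RATE_GBPS = 56        # FDR rate per QSFP port, per direction


def leaf_blades(total_ports: int, ports_per_blade: int) -> int:
    """Number of fully populated leaf blades implied by the port count."""
    blades, remainder = divmod(total_ports, ports_per_blade)
    if remainder:
        raise ValueError("port count is not a whole number of blades")
    return blades


def aggregate_tbps(ports: int, rate_gbps: float, directions: int = 2) -> float:
    """Aggregate throughput in Tb/s (assumption: both directions are counted)."""
    return ports * rate_gbps * directions / 1000


print(leaf_blades(TOTAL_PORTS, PORTS_PER_LEAF_BLADE))         # 6
print(round(aggregate_tbps(TOTAL_PORTS, PORT_RATE_GBPS), 1))  # 12.1
```

Under these assumptions, 108 ports x 56 Gb/s x 2 directions gives 12.096 Tb/s, which rounds to the quoted 12.1 Tb/s.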

Compliant with IBTA 1.2.1 and 1.3. 4 x 48K-entry linear forwarding table. QSFP+ connectors (SFF-8436 and INF-8438i compliant).

Passive copper or active fiber cables. Per-port status LEDs: Link, Activity. Status LEDs for system, power supplies and fans.

Frequency: 47-63Hz, single phase AC.

VAT IS NOT PAYABLE BY PURCHASERS OUTSIDE OF THE UK.

This item is in the category "Computers/Tablets & Networking\Enterprise Networking, Servers\Switches & Hubs\Network Switches". The seller is "itinstock" and is located in this country: GB. This item can be shipped worldwide.

- Model: SX6506

- Type: InfiniBand Switch

- MPN: MSX6506-NR, 100-575-220-00, SX6506

- Brand: Mellanox

- Form Factor: 7U